MUSIC ARCHITECTURE

ArchiMusic 3D

ARCHIMUSIC3D: REAL-TIME RECIPROCAL TRANSFORMATIONS BETWEEN MUSIC AND REFINED ARCHITECTURAL DESIGN

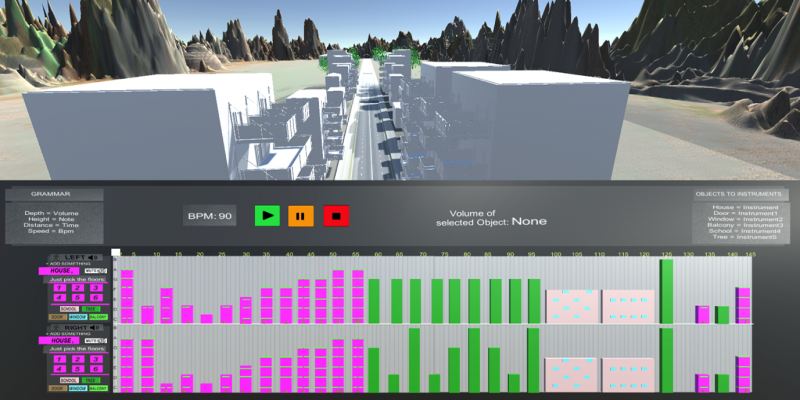

Many recent digital architectural design tools for urban environments fail to capture aspects of an urban design that are both aesthetic and functional. This thesis presents Archimusic3D, a platform implemented in the Unity development engine that offers three capabilities: first, viewing a 3D urban street and converting it to music, based on a specific grammar that maps architectural elements to musical elements; second, transforming this music in order to “harmonize” it according to musical rules; and finally, converting the aesthetically and harmonically “corrected” musical piece back into a newly refined street or urban design.

The presented platform comprises three scenes, which make up the three main parts of the system’s interface: the 3D scene, the Digital Audio Workstation (DAW) scene and the TouchOSC mobile controller. In the 3D scene, the user navigates a 3D street in order to observe the 3D graphical depiction of an urban environment. The second scene is an implementation of a Digital Audio Workstation (DAW) program, in which the user can see the musical footprint of the street rendered as a MIDI (Musical Instrument Digital Interface) file, listen to it and process it. Processing is conducted via a third tool representing a TouchOSC mobile controller, a virtual mobile console containing faders, potentiometers and buttons. Once the user has converted the 3D scene to a musical footprint, they submit the reciprocal transformations that may be applied to the musical part and are then able to navigate a newly refined street.

The musical footprint of a street is produced using a grammar, provided by the Digital Media Lab of the School of Architecture, Technical University of Crete, that connects musical with architectural elements. The urban designer is thereby assisted in 1) identifying urban dissonances, 2) refining their design using musical rules and 3) presenting the output both visually and acoustically.
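
The street-to-music-to-street round trip described above can be illustrated with a minimal sketch. The actual grammar of the Digital Media Lab is not given in this text, so every mapping here is a hypothetical stand-in chosen only for illustration: building height is assumed to map to pitch, building width to note duration, and "harmonizing" is assumed to mean snapping pitches to the C major scale. The names `Building`, `Note`, `street_to_notes`, `harmonize` and `notes_to_street` are likewise invented for this example and are not part of the platform.

```python
from dataclasses import dataclass

# Hypothetical constants: the real architectural/musical grammar is not
# specified in this text, so these values are assumptions for illustration.
BASE_PITCH = 48             # MIDI note C3, assumed to represent ground level
METRES_PER_SEMITONE = 1.0   # assumed: one metre of height = one semitone
METRES_PER_BEAT = 4.0       # assumed: four metres of width = one beat
C_MAJOR = {0, 2, 4, 5, 7, 9, 11}  # pitch classes of the C major scale


@dataclass
class Building:
    height_m: float
    width_m: float


@dataclass
class Note:
    pitch: int    # MIDI note number
    beats: float  # duration in beats


def street_to_notes(street):
    """Convert each building to a note (assumed height -> pitch, width -> duration)."""
    return [
        Note(
            pitch=BASE_PITCH + round(b.height_m / METRES_PER_SEMITONE),
            beats=b.width_m / METRES_PER_BEAT,
        )
        for b in street
    ]


def harmonize(notes):
    """Snap every out-of-scale pitch down to the nearest C major scale tone."""
    result = []
    for n in notes:
        pitch = n.pitch
        while pitch % 12 not in C_MAJOR:
            pitch -= 1
        result.append(Note(pitch=pitch, beats=n.beats))
    return result


def notes_to_street(notes):
    """Invert the assumed mapping: pitch -> height, duration -> width."""
    return [
        Building(
            height_m=(n.pitch - BASE_PITCH) * METRES_PER_SEMITONE,
            width_m=n.beats * METRES_PER_BEAT,
        )
        for n in notes
    ]


street = [Building(height_m=12.0, width_m=8.0), Building(height_m=13.0, width_m=4.0)]
footprint = street_to_notes(street)
refined = notes_to_street(harmonize(footprint))
```

Under these assumptions, the 13 m building maps to an out-of-scale pitch (MIDI 61), is snapped down to MIDI 60, and comes back as a 12 m building, which is one simple way a "dissonance" could translate into a revised design.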

Fotis Giariskanis, Katerina Mania, Panos Parthenios

SURREAL team of the TUC/MUSIC lab + DMLab